GCP Composer Airflow 2.0
12/20/2023

This clears your work and removes the project.Ĭloud Shell is a virtual machine that contains development tools. Note: Do not click End Lab unless you have finished the lab or want to restart it. If you use other credentials, you'll receive errors or incur charges.Īccept the terms and skip the recovery resource page. You will use them to sign in to the Google Cloud Console.Ĭlick Use another account and copy/paste credentials for this lab into the prompts. Note your lab credentials ( Username and Password). You can restart if needed, but you have to start at the beginning. Note the lab's access time (for example, 1:15:00), and make sure you can finish within that time. Sign in to Qwiklabs using an incognito window.

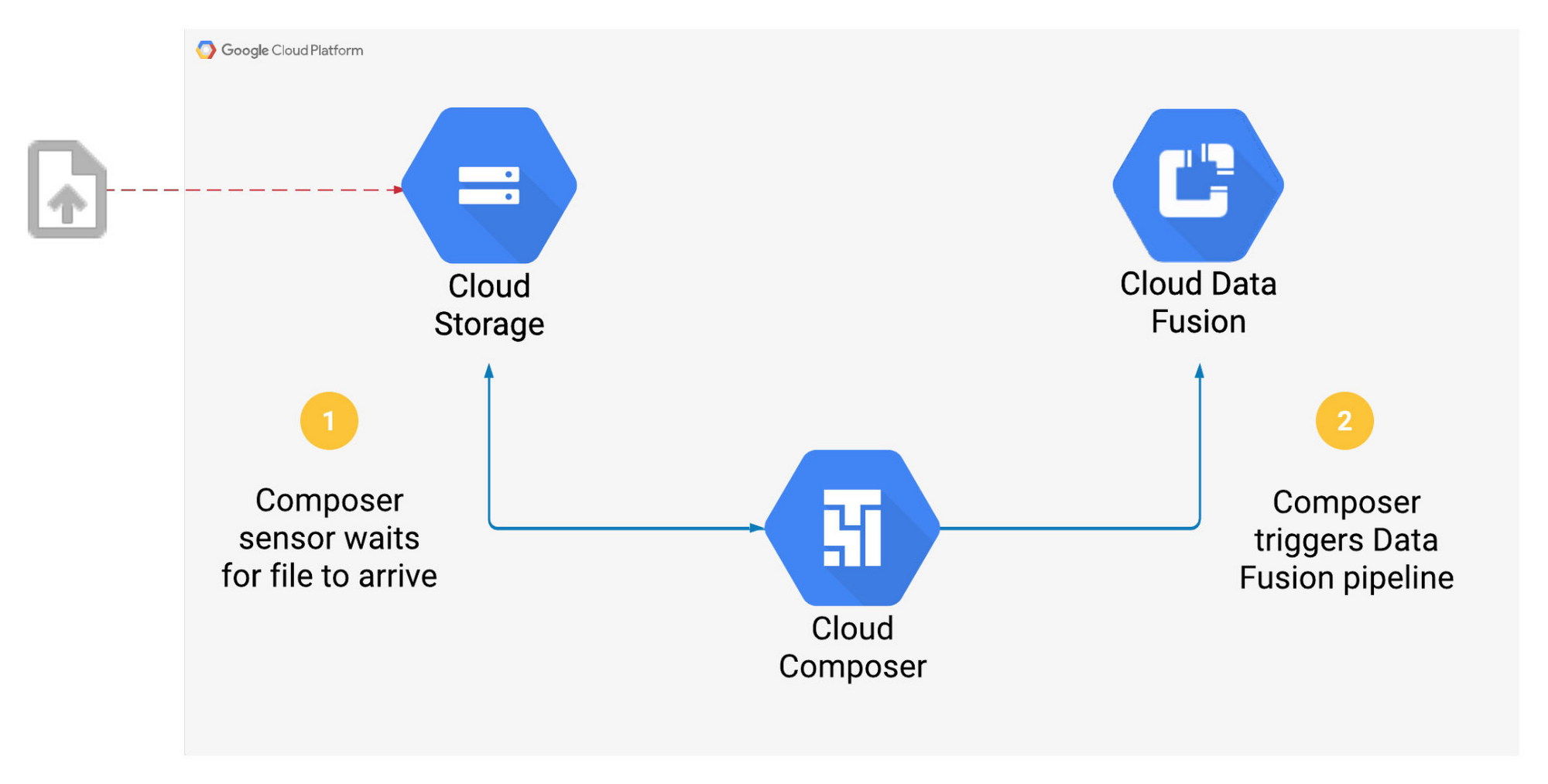

View the results of the wordcount job in storage.įor each lab, you get a new Google Cloud project and set of resources for a fixed time at no cost. View and run the DAG (Directed Acyclic Graph) in the Airflow web interface Use GCP Console to create the Cloud Composer environment You then use Cloud Composer to go through a simple workflow that verifies the existence of a data file, creates a Cloud Dataproc cluster, runs an Apache Hadoop wordcount job on the Cloud Dataproc cluster, and deletes the Cloud Dataproc cluster afterwards. In this lab, you use the GCP Console to set up a Cloud Composer environment. In Google Cloud Platform (GCP), the tool for hosting workflows is Cloud Composer which is a hosted version of the popular open source workflow tool Apache Airflow. This custom version is based on the public version 6.8.0.Workflows are a common theme in data analytics - they involve ingesting, transforming, and analyzing data to figure out the meaningful information within. The delegate_to param remains available only in Gsuite and marketing platform hooks and operators that don't interact with Google Cloud.įor a full list of changes in the apache-airflow-providers-google, see the changelog from version 8.9.0 to 10.1.1 on the apache-airflow-providers-google page.Ĭloud Composer uses a custom version of the apache-airflow-providers-google package, 2022.5.18+composer. Impersonation can be achieved by utilizing the impersonation_chain parameter instead. In this version of the provider package, the deprecated delegate_to parameter is removed from all GCP operators, hooks, and triggers, as well as from Firestore and Gsuite transfer operators that interact with GCS.protobuf=4.22.5 is included, this is the first Cloud Composer version with protobuf version 4.x.Google Ads default API changed from version 12 to 13.This version is based on the public version 10.1.1, with additional fixes to some operators and upgrades to many SDK package dependencies (such as protobuf). 
The apache-airflow-providers-google package in images with Airflow 2.5.1 and 2.4.3 was upgraded to version 2023.6.6+composer. New Dataplex operators handle creating, updating, getting, deleting and running a Data Quality scan, getting a Data Quality Scan job, creating and deleting a zone, as well as creating and deleting an asset. Dataplex is an intelligent data fabric that provides unified analytics and data management across your data lakes, data warehouses, and data marts.

The provided Cloud Batch Operators enable submitting, listing and deleting batch jobs as well as listing a job's tasks.
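The lab's workflow has four steps: check for the input file, create a Dataproc cluster, run wordcount, and delete the cluster. Together they form a small DAG. What follows is a minimal plain-Python sketch, not the lab's actual DAG file. It uses no Airflow or Google Cloud APIs, and the in-memory bucket, object names, and task function names are hypothetical stand-ins. In a real Composer DAG, these steps would typically map to provider operators such as GCSObjectExistenceSensor, DataprocCreateClusterOperator, DataprocSubmitJobOperator, and DataprocDeleteClusterOperator. The sketch runs the tasks in topological order with Python's graphlib.TopologicalSorter. It also imitates Airflow's `all_done` trigger rule, so the cleanup task still runs after an upstream failure.

```python
from collections import Counter
from graphlib import TopologicalSorter

# Hypothetical in-memory stand-in for a Cloud Storage bucket: object name -> text.
BUCKET = {"input/rose.txt": "a rose is a rose is a rose"}


def check_file_exists(state):
    # Stand-in for a Cloud Storage existence check on the input file.
    if state["input"] not in BUCKET:
        raise FileNotFoundError(state["input"])


def create_cluster(state):
    # Stand-in for creating a Dataproc cluster.
    state["cluster_up"] = True


def run_wordcount(state):
    # Stand-in for the Hadoop wordcount job: count whitespace-separated words.
    if not state.get("cluster_up"):
        raise RuntimeError("no cluster to run on")
    state["counts"] = Counter(BUCKET[state["input"]].split())


def delete_cluster(state):
    # Stand-in for tearing the cluster down so it stops incurring charges.
    state["cluster_up"] = False


TASKS = {
    "check_file_exists": check_file_exists,
    "create_cluster": create_cluster,
    "run_wordcount": run_wordcount,
    "delete_cluster": delete_cluster,
}

# Each task maps to the set of upstream tasks it waits for.
UPSTREAM = {
    "create_cluster": {"check_file_exists"},
    "run_wordcount": {"create_cluster"},
    "delete_cluster": {"run_wordcount"},
}

# "all_done" lets cleanup run even when an upstream task failed;
# every other task defaults to "all_success".
TRIGGER_RULES = {"delete_cluster": "all_done"}


def run_dag(input_object):
    """Run every task in a topological order of UPSTREAM.

    Returns (state, status), where status maps task name -> outcome.
    """
    state = {"input": input_object}
    status = {}
    for name in TopologicalSorter(UPSTREAM).static_order():
        upstream_ok = all(status[u] == "success" for u in UPSTREAM.get(name, ()))
        rule = TRIGGER_RULES.get(name, "all_success")
        if rule == "all_success" and not upstream_ok:
            status[name] = "upstream_failed"
            continue
        try:
            TASKS[name](state)
            status[name] = "success"
        except Exception:  # record the failure; downstream rules decide what runs next
            status[name] = "failed"
    return state, status


if __name__ == "__main__":
    state, status = run_dag("input/rose.txt")
    print(status)
    print(dict(state["counts"]))
```

With a missing object, such as `run_dag("input/missing.txt")`, the sketch marks check_file_exists as failed and the downstream wordcount as upstream_failed. delete_cluster still runs. This reflects the design choice of always cleaning up, because a Dataproc cluster left running keeps incurring charges.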
